As AI has advanced, so has Natural Language Processing (NLP), moving from simple spell checkers to sophisticated chatbots. The field changed sharply over the past few years with the arrival of Large Language Models (LLMs) such as GPT-4, which greatly expanded what language understanding and generation systems can do. This article compares LLMs and traditional NLP in terms of architecture, capabilities, application domains, and weaknesses.

Understanding Traditional NLP

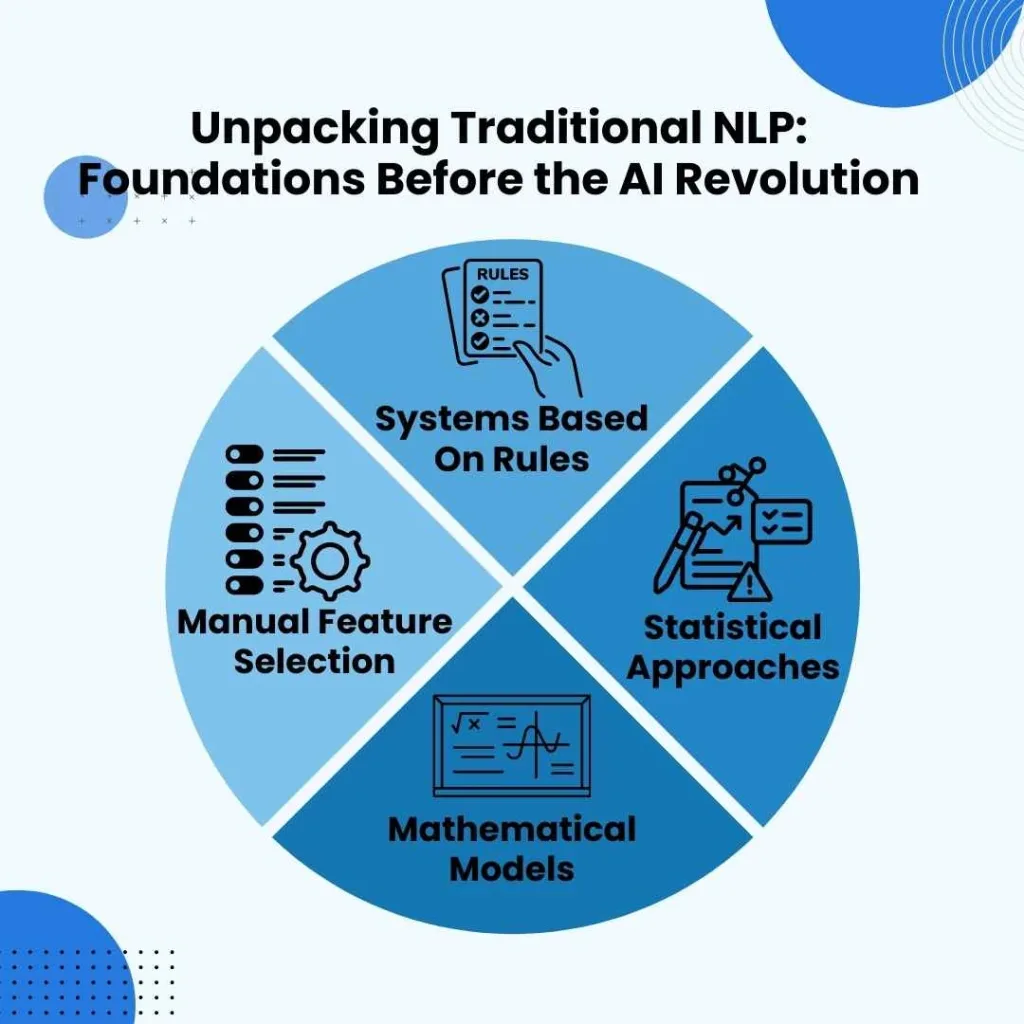

Traditional natural language processing (NLP) refers to the approaches used to process and interpret human language before large deep-learning models emerged. These techniques relied chiefly on:

- Rule-Based Systems: Early systems relied on handwritten linguistic rules and pattern matching, such as grammars and scripted response templates, to analyze and produce text.

- Statistical Approaches: With the rise of machine learning, statistical methods such as Hidden Markov Models (HMMs) and Conditional Random Fields (CRFs) came into use for tasks like part-of-speech tagging and named entity recognition (NER).

- Manual Feature Selection: Traditional NLP requires considerable manual effort to choose the right linguistic features, e.g., word frequency or syntactic structure, to optimize model performance.

- Mathematical Models: TF-IDF and Latent Semantic Analysis (LSA) represent text numerically, weighting terms by how often they occur and comparing words or documents through those weights, with little sensitivity to word order, context, or deeper meaning.

Traditional NLP methods work well for narrow, well-defined tasks, but they fall short on work that requires a deeper understanding of language and its nuances.
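The TF-IDF weighting mentioned above can be sketched in a few lines. This is a minimal illustration, assuming whitespace tokenization and the plain `tf * log(N / df)` formula; the function names and the three sample sentences are made up for this example, and production libraries typically add smoothing and normalization.

```python
import math
from collections import Counter

def tokenize(text):
    # Lowercase and split on whitespace; real pipelines also handle punctuation.
    return text.lower().split()

def tf_idf_vectors(docs):
    """Return one {term: weight} dict per document using tf * log(N / df)."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    # Document frequency: how many documents contain each term.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return vectors

def cosine(a, b):
    """Cosine similarity between two sparse {term: weight} vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Illustrative documents (assumed for this sketch).
docs = [
    "the bank approved the loan",
    "the bank of the river flooded",
    "the loan was approved quickly",
]
vecs = tf_idf_vectors(docs)
sim_01 = cosine(vecs[0], vecs[1])  # overlap comes only from "bank"
sim_02 = cosine(vecs[0], vecs[2])  # overlap comes from "loan" and "approved"
```

Note that the word "bank" raises the similarity between the first two sentences even though it refers to a financial institution in one and a riverside in the other. The weights depend only on term counts, which is the context blindness described above.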

The Rise of Large Language Models (LLMs)

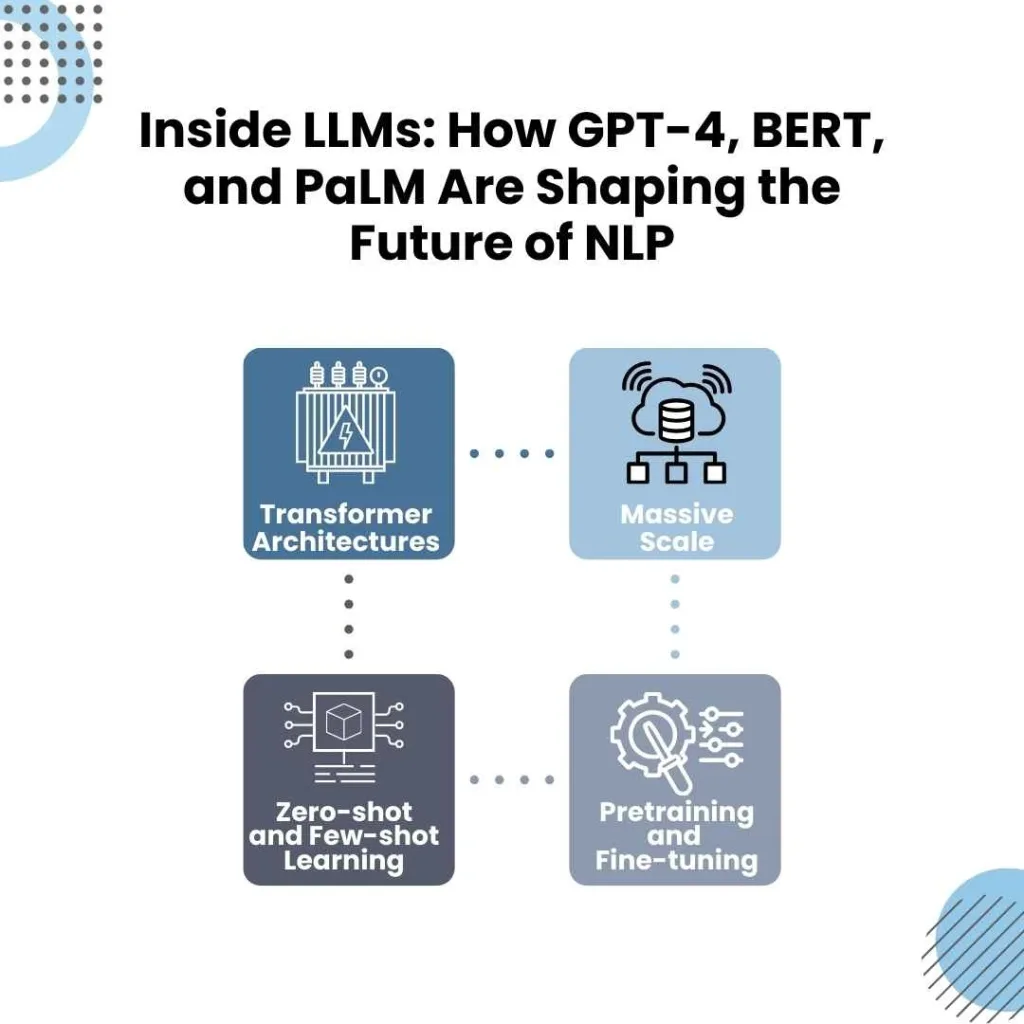

LLMs such as GPT-4, BERT, and PaLM have reshaped NLP by combining deep learning with vast datasets. They build on the following:

- Transformer Architectures: Unlike earlier recurrent neural networks (RNNs), which process text one token at a time, transformers use self-attention to relate every token to every other token in the input, so they capture context across the whole text rather than only nearby sections.

- Massive Scale: LLMs are trained on massive datasets covering many kinds of text, including large portions of the public web, which lets them pick up a wide range of patterns, contexts, and details.

- Pretraining and Fine-tuning: These models are first pretrained on large unlabelled text collections (self-supervised learning) and are then fine-tuned with supervised learning for tasks like summarization, question answering, and code generation.

- Zero-shot and Few-shot Learning: Unlike traditional NLP models, which need task-specific training, LLMs can perform many tasks from just an instruction or a handful of examples in the prompt, thanks to their ability to generalize.
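To make the self-attention idea from the list above concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It assumes identity projections (queries, keys, and values are all the raw input vectors) and a single head. Real transformers learn separate projection matrices, use multiple heads, and add positional information, and the toy token vectors below are invented for illustration.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (an assumption)."""
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # Score this token against every token in the sequence, itself included.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of all token vectors.
        outputs.append([sum(w[j] * x[j][i] for j in range(len(x))) for i in range(d)])
    return outputs, weights

# Three toy 4-dimensional token vectors; tokens 0 and 2 are identical.
tokens = [
    [1.0, 0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0, 1.0],
    [1.0, 0.0, 1.0, 0.0],
]
out, attn = self_attention(tokens)
```

Each row of `attn` is a probability distribution over all positions, so token 0 attends to token 2 as strongly as to itself regardless of how far apart they are. This direct access to every position is what lets transformers handle long-range dependencies without stepping through the sequence as an RNN does.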

Key Differences Between LLMs and Traditional NLP

| Aspect | Traditional NLP | LLMs (Large Language Models) |
| --- | --- | --- |
| Model Complexity and Architecture | Uses simple statistical models, handcrafted rules, and feature extraction. | Leverages deep learning with billions of parameters, transformers, and extensive training. |
| Data Requirements | Relies on manually labelled datasets, which are costly and time-consuming. | Trained on vast, unstructured datasets (books, articles, web pages), reducing manual annotation. |
| Context Understanding | Struggles with long-range dependencies and complex sentence structures. | Handles long-range dependencies effectively through self-attention mechanisms. |
| Adaptability and Generalization | Task-specific models that require retraining for new domains. | Generalizes well across tasks with minimal modifications. |
| Computational Power and Resource Needs | Can run on limited resources, making it practical for low-power applications. | Requires significant hardware (GPUs, TPUs) and extensive computational power. |
| Explainability and Transparency | More interpretable, and suitable for regulatory applications. | Functions as a black box, making interpretation difficult. |
| Performance in Real-World Applications | Effective for structured tasks like entity recognition and spell checking. | Handles open-ended tasks such as text generation, summarization, and coding. |
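The handcrafted-rule style in the Traditional NLP column can be illustrated with a small rule-based entity recognizer. This is a sketch under stated assumptions: the `RULES` table, its three labels, and the sample sentence are hypothetical, and real systems use much larger rule sets.

```python
import re

# Hypothetical hand-written rules: each entity label maps to one regular expression.
RULES = {
    "DATE": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "MONEY": re.compile(r"\$\d+(?:\.\d{2})?"),
}

def extract_entities(text):
    """Return (label, matched_text, start_offset) tuples sorted by position."""
    found = []
    for label, pattern in RULES.items():
        for match in pattern.finditer(text):
            found.append((label, match.group(), match.start()))
    return sorted(found, key=lambda item: item[2])

text = "Invoice sent to ana@example.com on 2024-03-15 for $120.50."
entities = extract_entities(text)

# A date written in words matches none of the rules above.
missed = extract_entities("Payment is due on March 15th.")
```

Every match can be traced back to the exact rule that produced it, which is the interpretability advantage listed in the table. The cost is brittleness: a date written as "March 15th" goes unrecognized until someone writes a new rule for it.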

Applications of LLMs vs. Traditional NLP

| Category | Traditional NLP | LLMs |
| --- | --- | --- |
| Chatbots & Conversational AI | Early chatbots like ELIZA used rule-based, scripted interactions. | Modern assistants (e.g., ChatGPT, Bard) generate human-like responses and engage in complex dialogues. |
| Text Summarization | Extractive summarization, selecting key sentences from documents. | Abstractive summarization, generating new summaries that better capture meaning. |
| Machine Translation | Phrase-based statistical translation (e.g., Google Translate pre-2016). | Neural machine translation, improving fluency and contextual accuracy. |
| Sentiment Analysis | Used predefined lexicons and feature-based classification. | Understands sentiment more deeply, adapting to slang, sarcasm, and idiomatic expressions. |
| Code Generation & Programming Assistance | Could not handle programming tasks effectively. | Generates code snippets, debugs programs, and explains concepts (e.g., GitHub Copilot). |
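The lexicon-based sentiment analysis in the table can be sketched as a simple word-score lookup. The six-word `LEXICON` and both example sentences are assumptions made for this illustration; real lexicons contain thousands of scored entries and often add negation handling.

```python
# Hypothetical toy lexicon mapping words to polarity scores.
LEXICON = {"great": 1, "love": 1, "good": 1, "terrible": -1, "hate": -1, "bad": -1}

def lexicon_sentiment(text):
    """Sum word polarities and map the total to a label."""
    # Strip basic punctuation so "great," still matches "great".
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(LEXICON.get(w, 0) for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

sincere = lexicon_sentiment("I love this phone, the camera is great.")
sarcastic = lexicon_sentiment("Oh great, the battery died again.")
print(sincere)    # positive
print(sarcastic)  # positive, although the sentence is a complaint
```

The second sentence is labelled positive because "great" is the only word the lexicon knows. Since each word is scored in isolation, sarcasm and idioms are invisible to this approach, which is the gap the LLM column describes.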

The Future of NLP: A Hybrid Approach?

Although LLMs now dominate many areas, traditional NLP remains relevant and useful. Its core strength, interpretability, could be combined with the adaptability of LLMs to produce hybrid systems that are both explainable and able to handle new tasks. Improving the deployment efficiency of LLMs while reducing their biases should also improve future results.

Conclusion

The shift from earlier Natural Language Processing (NLP) techniques to Large Language Models (LLMs) marks a clear change in how computers understand and produce language. Traditional NLP techniques remain useful for some tasks, while LLMs have opened up possibilities that earlier methods could not reach. Knowing the strengths and limitations of both approaches is essential for choosing the right tool for any NLP task.